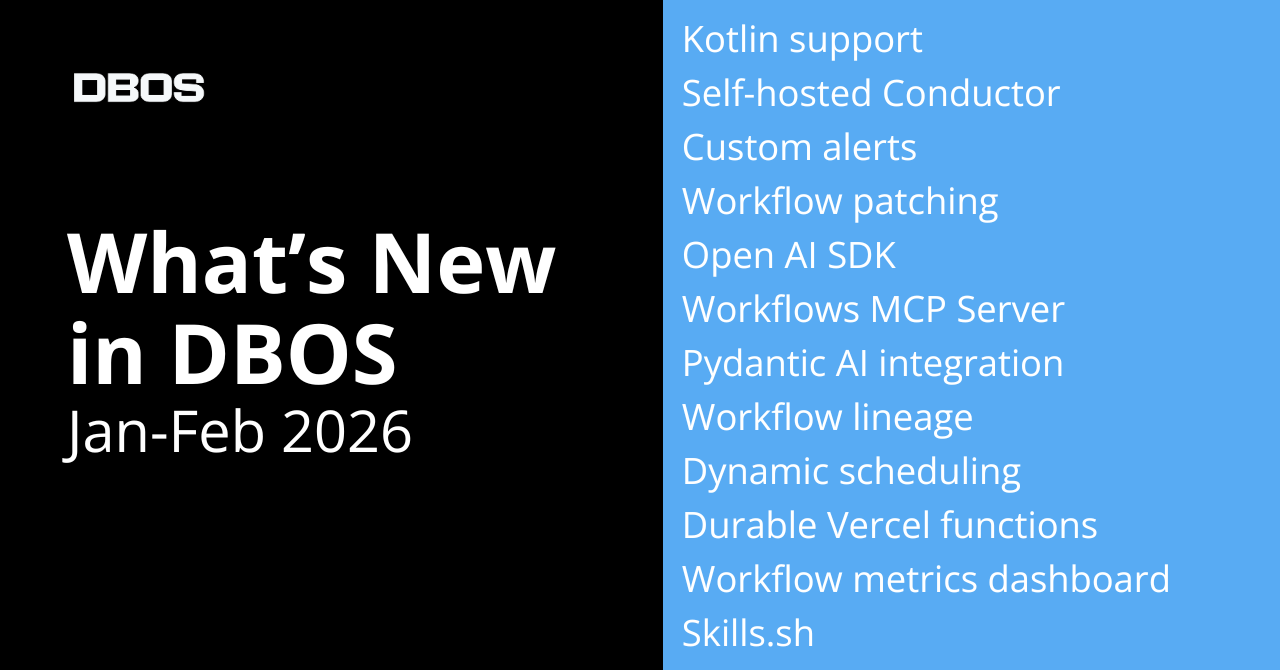

The past two months were all about durable AI. We introduced Kotlin support, launched configurable alerting and the new DBOS MCP server in Conductor, and expanded our ecosystem with powerful new integrations. Here’s a summary of what’s new:

New in DBOS Transact open source libraries

- Kotlin support

- Workflow patching

- Workflow lineage tracking

- Dynamic workflow scheduling

- Explicit queue listening

New in DBOS Conductor

- Self-hosted Conductor - run it anywhere

- MCP server - agentic workflow troubleshooting

- Custom alerting

- New metrics dashboard

- Workflow export

New integrations

- OpenAI Agents SDK

- Pydantic AI integration

- Durable Vercel apps template (with persistence to Supabase)

- DBOS Transact added to skills.sh

Read on for more details on each of these enhancements.

New Features in DBOS Transact

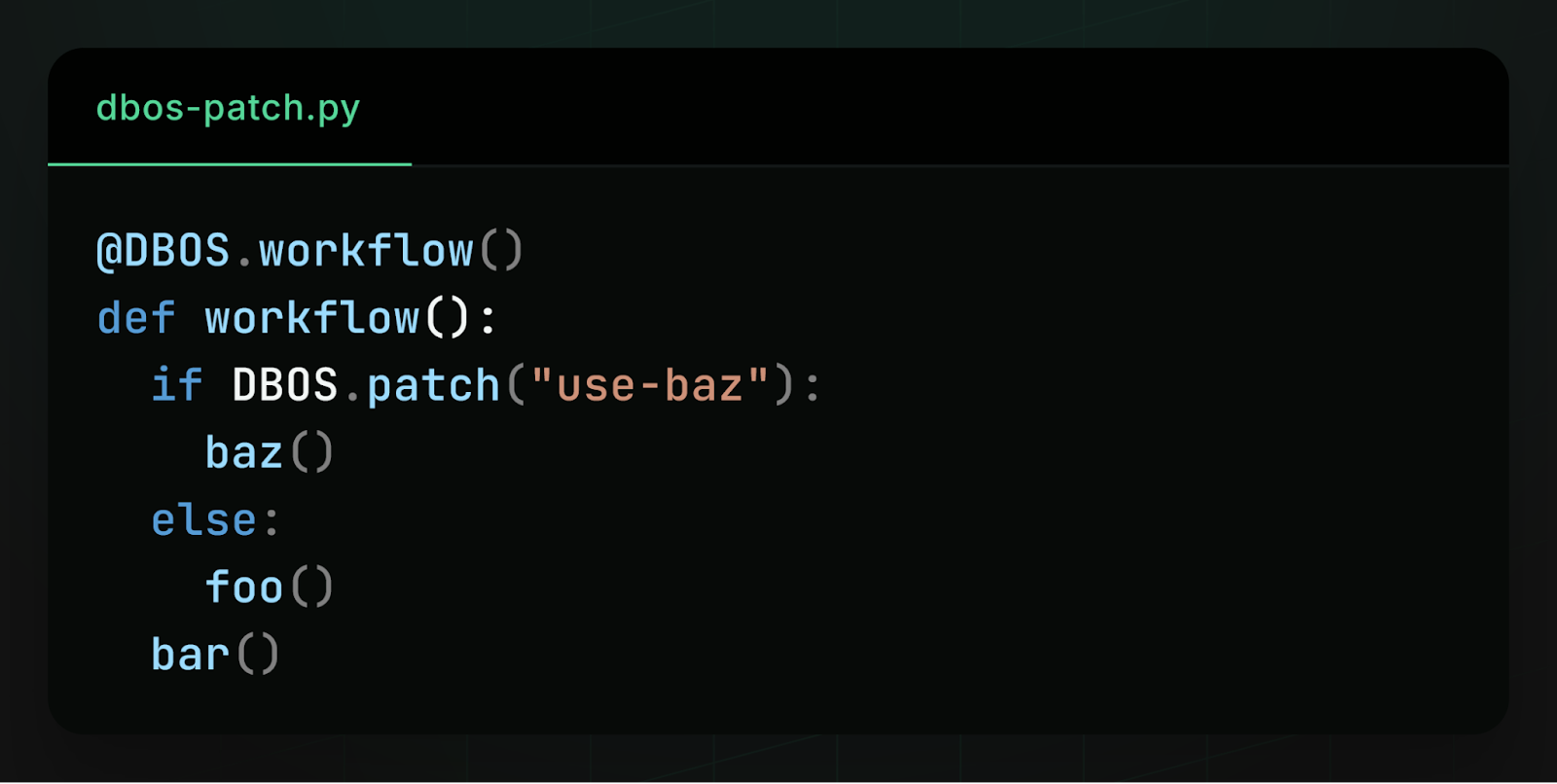

Workflow Patching (in addition to Versioning)

You can now upgrade workflow code even when workflows are actively running.

With patching, you insert a patch point in your workflow. New executions follow the updated code path, while existing workflows resume safely using the original logic. This is especially important for workflows that run for days or weeks.
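To make the idea concrete, here is a toy, self-contained model of a patch point. It is not the DBOS API: the `WorkflowRun` class, its `patch()` method, and the patch name `use-new-pricing` are hypothetical. The assumption it illustrates is that a fresh execution reaching the patch point records a marker in its checkpoint log, while a workflow that started under the old code has no marker at that position and therefore keeps following the original branch when it is replayed.

```python
from dataclasses import dataclass, field


@dataclass
class WorkflowRun:
    """Toy checkpoint log: one entry per step, in execution order (hypothetical)."""
    workflow_id: str
    log: list = field(default_factory=list)
    cursor: int = 0  # position of the next checkpoint during (re)execution

    def record(self, step: str) -> None:
        if self.cursor < len(self.log):
            # Replaying: the recorded step must match what the code does now.
            if self.log[self.cursor] != step:
                raise RuntimeError(
                    f"non-deterministic replay: log has {self.log[self.cursor]!r}, code ran {step!r}"
                )
        else:
            self.log.append(step)  # first execution: checkpoint the step
        self.cursor += 1

    def patch(self, name: str) -> bool:
        marker = f"patch:{name}"
        if self.cursor < len(self.log):
            # Replaying: take the new branch only if this run recorded the marker.
            if self.log[self.cursor] == marker:
                self.cursor += 1
                return True
            return False
        # Fresh execution reaching this point: record the marker, take the new branch.
        self.log.append(marker)
        self.cursor += 1
        return True


def checkout(run: WorkflowRun) -> list:
    run.cursor = 0  # every (re)execution replays from the start of the log
    run.record("reserve_inventory")
    if run.patch("use-new-pricing"):
        run.record("charge_v2")  # updated logic
    else:
        run.record("charge_v1")  # original logic
    run.record("send_receipt")
    return run.log


# Started under the old code (no patch point) and crashed after charging.
old = WorkflowRun("wf-old", log=["reserve_inventory", "charge_v1"])
print(checkout(old))  # ['reserve_inventory', 'charge_v1', 'send_receipt']

# Started after the upgrade: takes the new branch.
new = WorkflowRun("wf-new")
print(checkout(new))  # ['reserve_inventory', 'patch:use-new-pricing', 'charge_v2', 'send_receipt']
```

Without the `patch()` branch, replaying `wf-old` against code that calls `charge_v2` would hit the mismatch error in `record()`, which is the situation patching is meant to avoid.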

Read the docs: TypeScript, Python, Go, and Java.

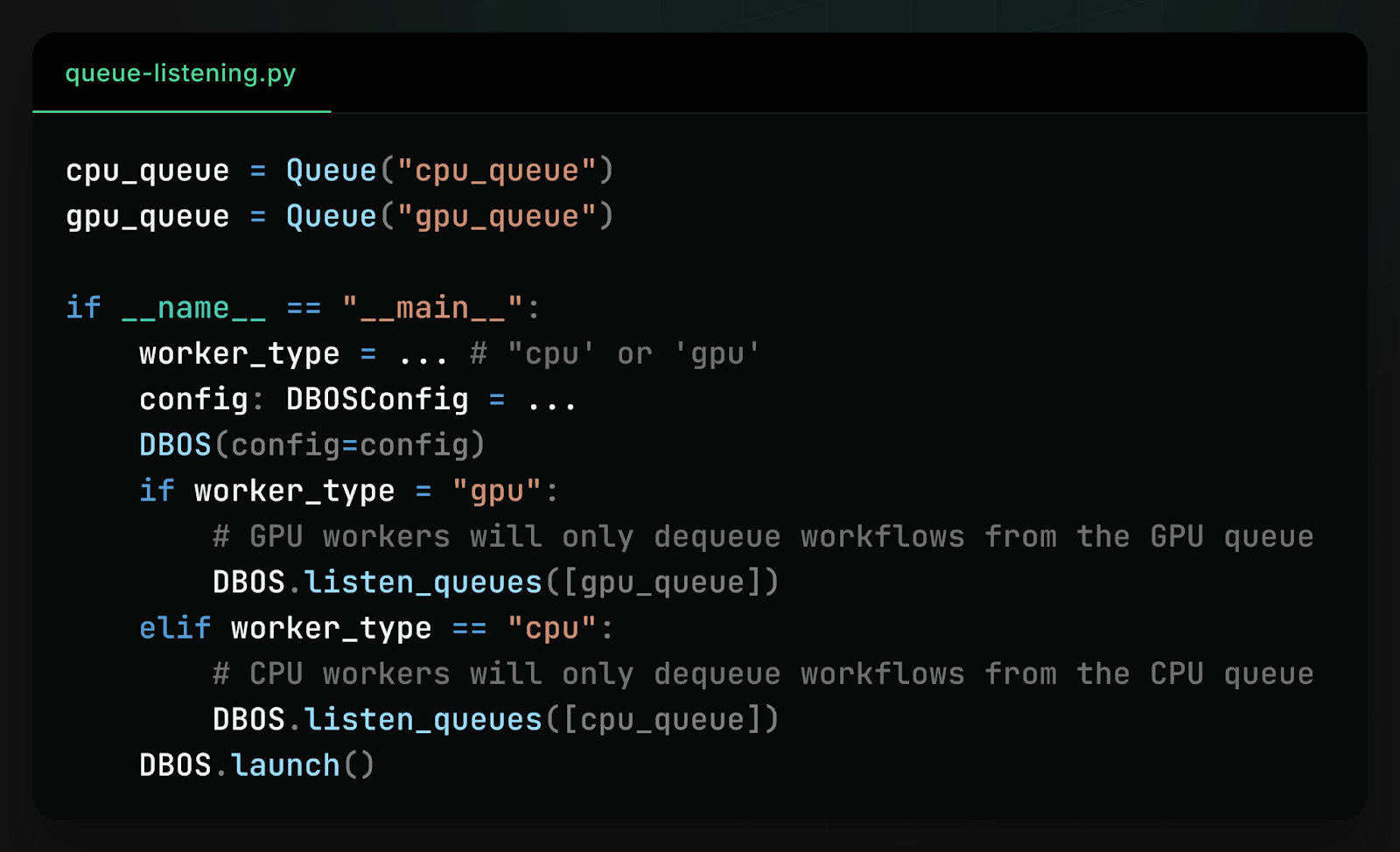

Explicit Queue Listening

You can now configure exactly which queues each DBOS process listens to.

This is particularly useful for heterogeneous worker pools, for example:

- GPU-heavy workloads on dedicated machines

- CPU-intensive tasks on separate workers

- Isolated queues for different tenants or priorities
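The routing rule can be pictured with a small in-memory simulation. This is a hypothetical sketch, not DBOS code: the `Worker` class, its `listens_to` set, and the queue names are made up for illustration. Each worker only pulls from the queues it is configured to listen to, so GPU jobs never land on CPU-only machines, and a queue that no process listens to simply waits.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class Worker:
    name: str
    listens_to: set  # queues this process dequeues from (hypothetical config)
    done: list = field(default_factory=list)


def dispatch(queues: dict, workers: list) -> None:
    """Hand out tasks round by round; a worker only pulls from queues it listens to."""
    progress = True
    while progress:
        progress = False
        for w in workers:
            for qname in sorted(w.listens_to):
                q = queues.get(qname)
                if q:  # skip missing or empty queues
                    w.done.append(q.popleft())
                    progress = True
                    break  # at most one task per worker per round


queues = {
    "gpu": deque(["embed-1", "embed-2"]),
    "cpu": deque(["resize-1"]),
    "tenant-a": deque(["sync-a"]),
    "reports": deque(["report-1"]),  # no worker listens here, so it waits
}
workers = [Worker("gpu-box", {"gpu"}), Worker("cpu-box", {"cpu", "tenant-a"})]
dispatch(queues, workers)
for w in workers:
    print(w.name, w.done)
# gpu-box ['embed-1', 'embed-2']
# cpu-box ['resize-1', 'sync-a']
```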

Read the docs: TypeScript, Python, Go, and Java.

Workflow Lineage Tracking

Workflow parent/child relationships are now recorded and indexed, making it much easier to navigate complex trees of nested workflows.

Upgrade to the latest version of the DBOS Transact library to access the updated workflow status schema: https://docs.dbos.dev/explanations/system-tables#dbosworkflow_status
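Once each workflow row carries its parent's ID, rebuilding the tree is a simple grouping. The sketch below uses hand-written rows with a hypothetical `parent_workflow_id` field standing in for whatever column the schema actually uses; check the system-tables documentation for the real name. It renders the tree top-down and also walks upward from a nested child to its root.

```python
from collections import defaultdict

# Hypothetical status rows; "parent_workflow_id" stands in for the real column name.
rows = [
    {"workflow_id": "order-1", "parent_workflow_id": None, "name": "process_order"},
    {"workflow_id": "order-1-pay", "parent_workflow_id": "order-1", "name": "charge_card"},
    {"workflow_id": "order-1-ship", "parent_workflow_id": "order-1", "name": "ship_items"},
    {"workflow_id": "order-1-label", "parent_workflow_id": "order-1-ship", "name": "print_label"},
]


def children_index(rows):
    """Group rows by parent ID so each node's children can be looked up directly."""
    children = defaultdict(list)
    for r in rows:
        children[r["parent_workflow_id"]].append(r)
    return children


def render(children, parent=None, depth=0):
    """Depth-first walk that lists the tree with indentation."""
    lines = []
    for r in children.get(parent, []):
        lines.append("  " * depth + f"{r['name']} ({r['workflow_id']})")
        lines.extend(render(children, r["workflow_id"], depth + 1))
    return lines


def ancestors(rows, workflow_id):
    """Follow parent links upward from one workflow to the root."""
    by_id = {r["workflow_id"]: r for r in rows}
    chain, parent = [], by_id[workflow_id]["parent_workflow_id"]
    while parent is not None:
        chain.append(parent)
        parent = by_id[parent]["parent_workflow_id"]
    return chain


print("\n".join(render(children_index(rows))))
print(ancestors(rows, "order-1-label"))  # ['order-1-ship', 'order-1']
```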

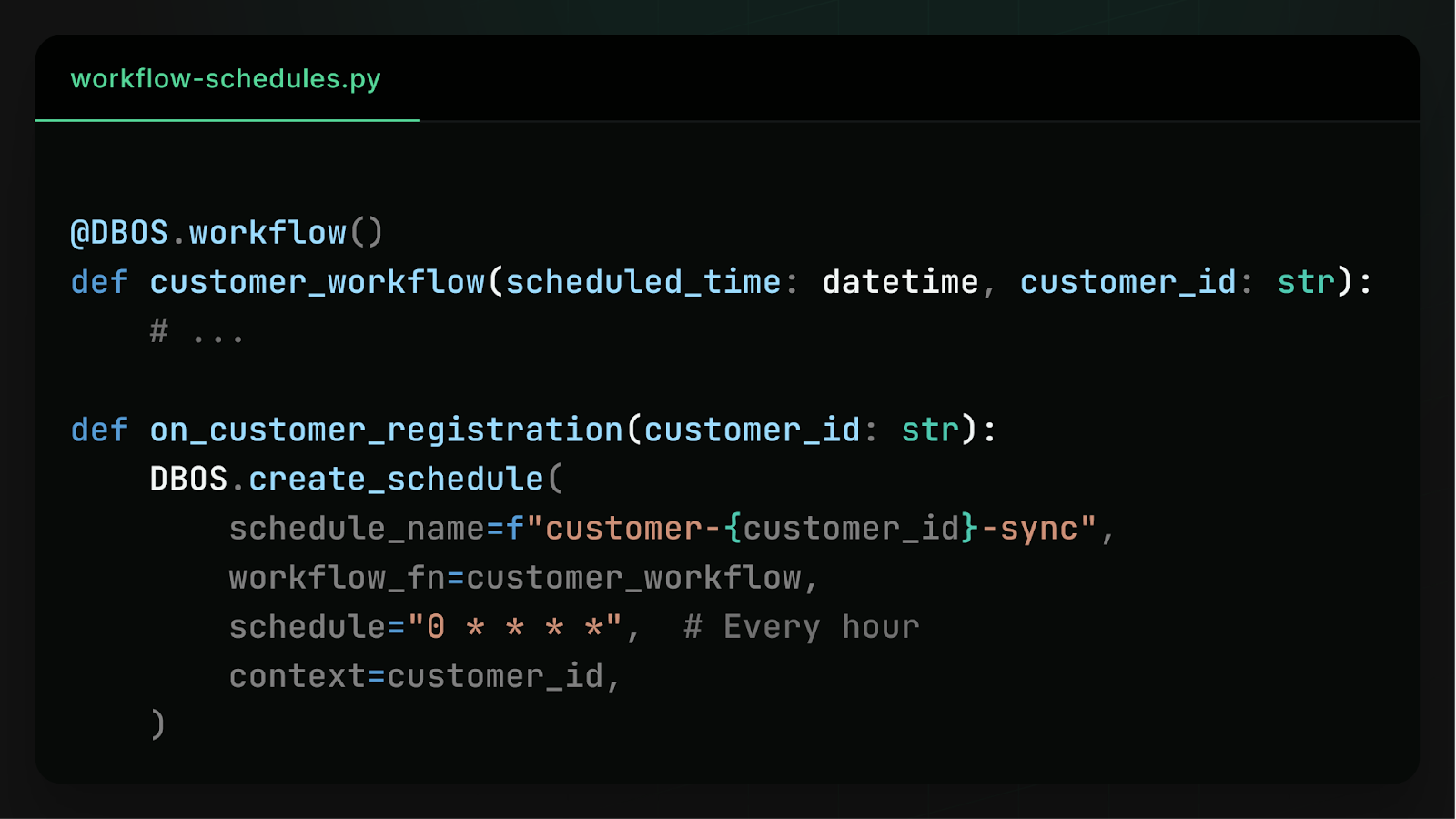

Dynamic Workflow Scheduling

Workflow schedules are now stored in Postgres, allowing you to:

- Create schedules dynamically at runtime

- View and update schedules

- Backfill missed runs

- Manually trigger executions

This is especially useful when onboarding customers and automatically provisioning recurring jobs (e.g., periodic data syncs).
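Because schedules are rows in a database rather than decorators in code, creating one for a new customer is just an insert, and backfilling amounts to computing the run times that fell between the last recorded run and now. The sketch below models that with Python's built-in `sqlite3` standing in for Postgres. The `schedules` table layout, the fixed-interval schedule (instead of a cron expression), and the function names are all hypothetical, not the DBOS API.

```python
import sqlite3
from datetime import datetime, timedelta

# In-memory SQLite stands in for Postgres; the table layout is hypothetical.
schedule_db = sqlite3.connect(":memory:")
schedule_db.execute(
    """CREATE TABLE schedules (
        name TEXT PRIMARY KEY,
        workflow TEXT NOT NULL,
        interval_seconds INTEGER NOT NULL,
        last_run TEXT NOT NULL)"""
)


def create_schedule(name: str, workflow: str, every: timedelta, start: datetime) -> None:
    """Create a schedule at runtime; `start` is treated as the last completed run."""
    schedule_db.execute(
        "INSERT INTO schedules VALUES (?, ?, ?, ?)",
        (name, workflow, int(every.total_seconds()), start.isoformat()),
    )


def update_interval(name: str, every: timedelta) -> None:
    schedule_db.execute(
        "UPDATE schedules SET interval_seconds = ? WHERE name = ?",
        (int(every.total_seconds()), name),
    )


def backfill(name: str, now: datetime) -> list:
    """Return every run missed since last_run (up to now) and advance last_run."""
    workflow, secs, last = schedule_db.execute(
        "SELECT workflow, interval_seconds, last_run FROM schedules WHERE name = ?",
        (name,),
    ).fetchone()
    step = timedelta(seconds=secs)
    t, missed = datetime.fromisoformat(last) + step, []
    while t <= now:
        missed.append((workflow, t))
        t += step
    if missed:
        schedule_db.execute(
            "UPDATE schedules SET last_run = ? WHERE name = ?",
            (missed[-1][1].isoformat(), name),
        )
    return missed


create_schedule("acme-sync", "sync_customer_data", timedelta(hours=6), datetime(2025, 1, 1))
for workflow, when in backfill("acme-sync", datetime(2025, 1, 1, 20)):
    print(workflow, when)  # runs at 06:00, 12:00 and 18:00
```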

Read the docs: TypeScript and Python.

Build durable workflows with Kotlin

DBOS now supports Kotlin!

You can run DBOS directly in your Kotlin applications using the new Spring Boot starter:

https://github.com/dbos-inc/dbos-demo-apps/tree/main/kotlin/dbos-starter

Agent Skills

DBOS is now available on skills.sh.

Teach your coding agent (Claude Code, Codex, Antigravity, etc.) how to write durable workflows with a single command:

npx skills add dbos-inc/agent-skills

New Features in Conductor

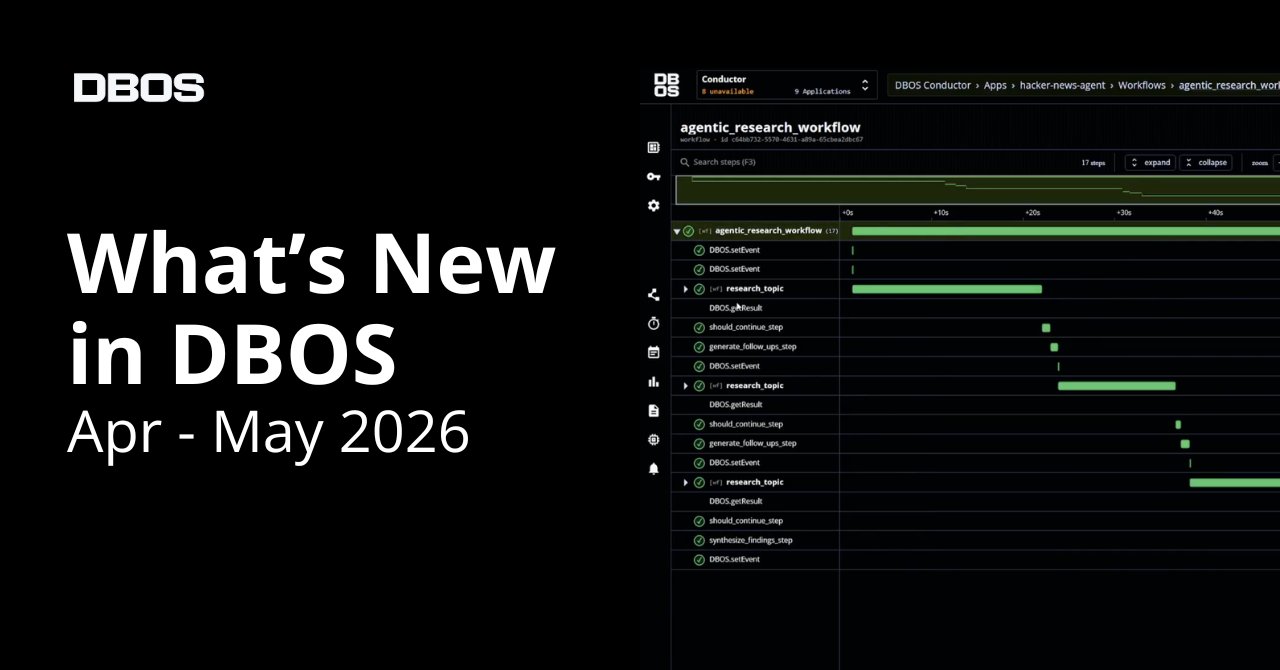

DBOS MCP Server - Agentic durable workflow troubleshooting

We launched the DBOS MCP server, giving AI agents direct access to workflow troubleshooting tools such as:

- list workflows

- get workflow

- list workflow steps

- fork workflow

This allows agents to inspect what actually happened during failures and help diagnose and fix issues. Learn more in this blog post.
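The value of forking for an agent is that it can restart a failed run from the step that broke, without repeating the steps that already succeeded. The following toy model shows that idea in plain Python; it is not the MCP server's interface, and `StepRecord`, `first_failed_step()`, `fork()`, and the invoice workflow are hypothetical. Steps are identified by their position in the workflow.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional


@dataclass
class StepRecord:
    step_id: int
    name: str
    output: Optional[str] = None
    error: Optional[str] = None


def first_failed_step(steps: List[StepRecord]) -> Optional[int]:
    """What an agent might look for in the result of a 'list workflow steps' call."""
    for s in steps:
        if s.error is not None:
            return s.step_id
    return None


def fork(record: List[StepRecord], definition: List[str], start: int,
         impls: Dict[str, Callable[[], str]]) -> List[StepRecord]:
    """Re-run from step `start`, reusing recorded outputs of earlier steps."""
    saved = {s.step_id: s.output for s in record if s.error is None}
    forked = []
    for step_id, name in enumerate(definition):
        if step_id < start:
            forked.append(StepRecord(step_id, name, saved[step_id]))  # reused, not re-run
        else:
            forked.append(StepRecord(step_id, name, impls[name]()))  # executed again
    return forked


definition = ["fetch_invoice", "call_payment_api", "email_customer"]
failed_run = [
    StepRecord(0, "fetch_invoice", output="invoice-42"),
    StepRecord(1, "call_payment_api", error="TimeoutError"),
]
ran = []  # which step implementations actually executed


def make_impl(name: str) -> Callable[[], str]:
    def impl() -> str:
        ran.append(name)
        return f"{name}-ok"
    return impl


impls = {name: make_impl(name) for name in definition}
start = first_failed_step(failed_run)  # 1
forked = fork(failed_run, definition, start, impls)
print(ran)  # ['call_payment_api', 'email_customer']
print([s.output for s in forked])  # ['invoice-42', 'call_payment_api-ok', 'email_customer-ok']
```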

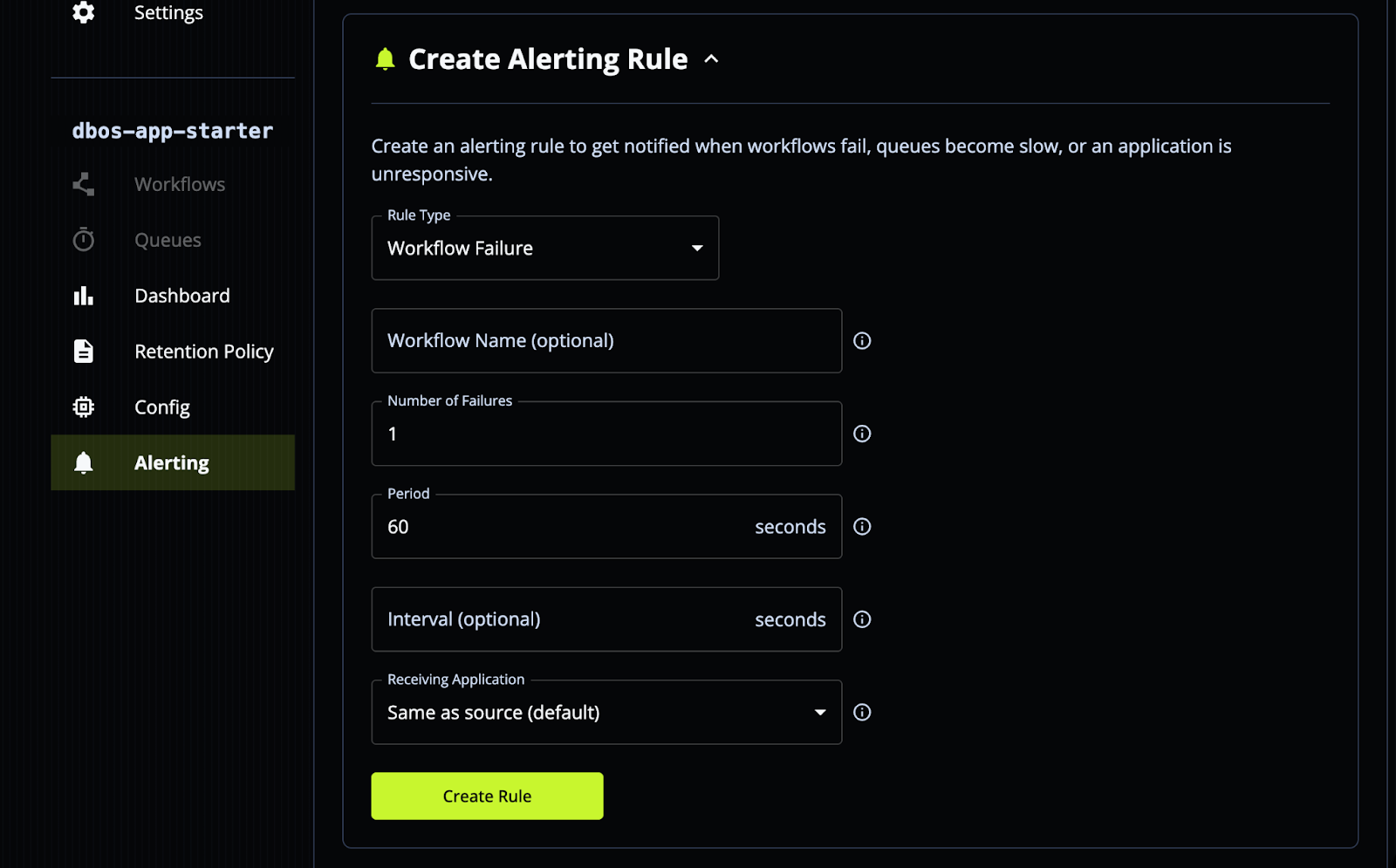

Custom Workflow Alerting

You can now configure alerts for production issues, including:

- Excessive workflow failures

- Workflows stuck in queues

- Unresponsive applications

Alerts can be forwarded to any of your applications, which can then integrate with services like Slack or PagerDuty. This ensures you're notified as soon as something goes wrong in production.

Alerting requires a DBOS Teams plan or higher.

Docs: https://docs.dbos.dev/production/alerting
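Each of these conditions boils down to a threshold check over recent workflow state. The sketch below is a hypothetical model of such checks, not Conductor's implementation or configuration format: the `Snapshot` fields, the thresholds, and the alert dictionaries are assumptions. It also shows how a receiving application might turn an alert into a Slack-style `{"text": ...}` message body before posting it to an incoming webhook (the HTTP call itself is omitted).

```python
import json
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List, Optional


@dataclass
class Snapshot:
    """Hypothetical view of recent production state."""
    now: datetime
    failures_last_10m: int
    oldest_enqueued_at: Optional[datetime]
    last_heartbeat: datetime


def evaluate(s: Snapshot,
             max_failures: int = 5,
             max_queue_wait: timedelta = timedelta(minutes=15),
             heartbeat_timeout: timedelta = timedelta(minutes=2)) -> List[dict]:
    """Return one alert dict per condition that is currently breached."""
    alerts = []
    if s.failures_last_10m > max_failures:
        alerts.append({"type": "workflow_failures",
                       "detail": f"{s.failures_last_10m} failures in the last 10 minutes"})
    if s.oldest_enqueued_at is not None and s.now - s.oldest_enqueued_at > max_queue_wait:
        alerts.append({"type": "stuck_in_queue",
                       "detail": f"oldest task has waited {s.now - s.oldest_enqueued_at}"})
    if s.now - s.last_heartbeat > heartbeat_timeout:
        alerts.append({"type": "unresponsive",
                       "detail": f"no heartbeat since {s.last_heartbeat:%H:%M}"})
    return alerts


def to_slack_payload(alert: dict) -> str:
    """Body a receiving app could POST to a Slack incoming webhook (HTTP call omitted)."""
    return json.dumps({"text": f"[{alert['type']}] {alert['detail']}"})


now = datetime(2025, 1, 1, 12, 0)
snapshot = Snapshot(now, failures_last_10m=9,
                    oldest_enqueued_at=now - timedelta(minutes=30),
                    last_heartbeat=now - timedelta(seconds=30))
for alert in evaluate(snapshot):
    print(to_slack_payload(alert))
```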

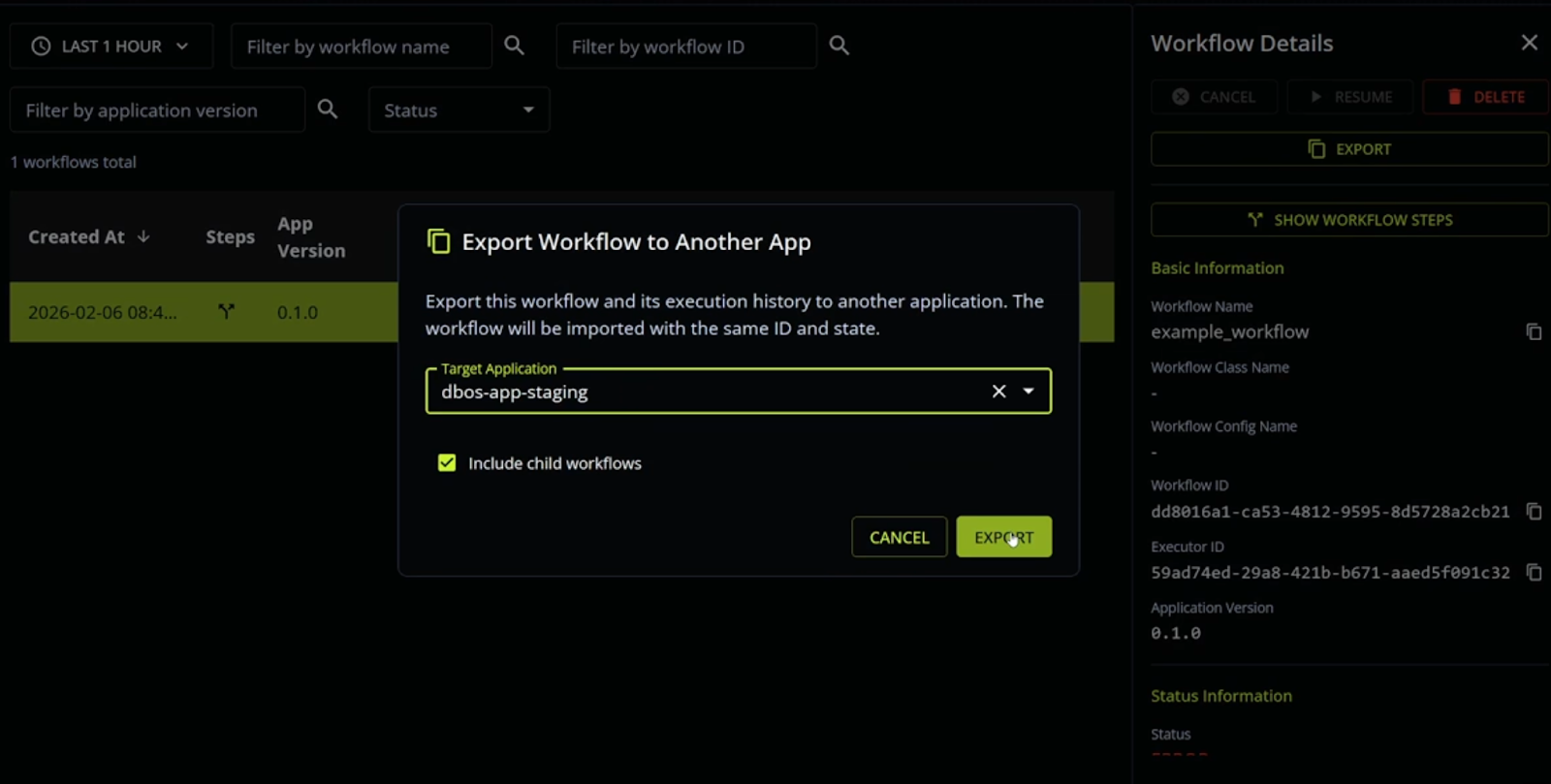

Workflow Export

You can now copy and paste a workflow between environments.

A common use case: exporting a workflow from production to development to reproduce and debug unexpected errors.
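Conceptually, exporting a workflow can be thought of as serializing its status, inputs, and recorded step history so another environment can load the same history. This is a hypothetical sketch with plain dictionaries standing in for each environment's workflow store; the record layout and the `export_workflow()` and `import_workflow()` helpers are assumptions, not Conductor's export format.

```python
import json

# Dictionaries stand in for each environment's workflow store (hypothetical layout).
production = {
    "wf-123": {
        "name": "process_refund",
        "status": "ERROR",
        "inputs": {"order_id": "A-991", "amount": 40},
        "steps": [
            {"name": "lookup_order", "output": {"currency": None}},
            {"name": "issue_refund", "error": "KeyError('currency')"},
        ],
    }
}
development = {}


def export_workflow(store: dict, workflow_id: str) -> str:
    """Serialize one workflow's status, inputs, and step history to JSON text."""
    return json.dumps({"workflow_id": workflow_id, **store[workflow_id]}, sort_keys=True)


def import_workflow(store: dict, blob: str) -> str:
    """Load an exported workflow, refusing to overwrite an existing ID."""
    data = json.loads(blob)
    workflow_id = data.pop("workflow_id")
    if workflow_id in store:
        raise ValueError(f"{workflow_id} already exists in the target environment")
    store[workflow_id] = data
    return workflow_id


blob = export_workflow(production, "wf-123")  # "copy" in production
import_workflow(development, blob)            # "paste" in development
print(development["wf-123"]["steps"][1]["error"])  # KeyError('currency')
```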

Self-Hosted Conductor

Run the entire DBOS stack anywhere, including on your laptop or in air-gapped on-prem infrastructure.

Self-hosted Conductor is released under a proprietary license. Commercial or production use requires a paid license key.

Docs: https://docs.dbos.dev/production/hosting-conductor

Durable Execution Metrics Dashboard

Monitor real-time workflow metrics, including:

- Workflow and step execution frequency

- Queue lengths

- Operational trends

This provides immediate visibility into system health and performance.
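Metrics like these are aggregations over workflow and step records. As a rough sketch of the arithmetic only (not how the dashboard is implemented), the snippet below counts step executions per minute and current queue depth from in-memory records; the record shapes and the status strings are illustrative assumptions.

```python
from collections import Counter
from datetime import datetime
from typing import Iterable, Tuple


def per_minute(timestamps: Iterable[datetime]) -> Counter:
    """Executions per wall-clock minute, e.g. for a frequency chart."""
    return Counter(ts.replace(second=0, microsecond=0) for ts in timestamps)


def queue_lengths(tasks: Iterable[Tuple[str, str]]) -> Counter:
    """Tasks still waiting in each queue, given (queue_name, status) pairs."""
    return Counter(queue for queue, status in tasks if status == "ENQUEUED")


t0 = datetime(2025, 1, 1, 12, 0)
step_finished = [t0.replace(second=5), t0.replace(second=40), t0.replace(minute=1, second=10)]
tasks = [("emails", "ENQUEUED"), ("emails", "PENDING"), ("emails", "ENQUEUED"), ("reports", "SUCCESS")]

for minute, count in sorted(per_minute(step_finished).items()):
    print(minute.strftime("%H:%M"), count)  # 12:00 2, then 12:01 1
print(dict(queue_lengths(tasks)))  # {'emails': 2}
```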

New Partnerships and Integrations

Vercel + Supabase + DBOS Template

We released a production-ready template for running reliable background jobs in a Vercel app.

Pattern:

- Write tasks as DBOS workflows (plain TypeScript)

- Enqueue them from your app

- Vercel functions automatically execute them

- Supabase/Postgres serves as the only backend

No separate orchestrator required.
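The enqueue-then-execute pattern can be illustrated with a database table acting as the queue. This hypothetical sketch uses `sqlite3` in place of Supabase/Postgres and is not the template's code: the `tasks` table, `enqueue()`, `claim_next()`, `run_one()`, and the `summarize` handler are made up. The important property is that claiming a task is atomic, so two concurrent function invocations never pick up the same row; on Postgres that is typically done with `SELECT ... FOR UPDATE SKIP LOCKED`.

```python
import sqlite3

# SQLite in autocommit mode stands in for Supabase/Postgres; the schema is hypothetical.
queue_db = sqlite3.connect(":memory:", isolation_level=None)
queue_db.execute(
    """CREATE TABLE tasks (
        id INTEGER PRIMARY KEY,
        name TEXT NOT NULL,
        payload TEXT NOT NULL,
        status TEXT NOT NULL DEFAULT 'ENQUEUED',
        result TEXT)"""
)

HANDLERS = {"summarize": lambda text: text[:20] + "..."}  # hypothetical task


def enqueue(name: str, payload: str) -> int:
    """Called from an app route: enqueueing is just an insert."""
    cur = queue_db.execute("INSERT INTO tasks (name, payload) VALUES (?, ?)", (name, payload))
    return cur.lastrowid


def claim_next():
    """Atomically claim the oldest enqueued task (Postgres: FOR UPDATE SKIP LOCKED)."""
    queue_db.execute("BEGIN IMMEDIATE")  # take the write lock before reading
    row = queue_db.execute(
        "SELECT id, name, payload FROM tasks WHERE status = 'ENQUEUED' ORDER BY id LIMIT 1"
    ).fetchone()
    if row is not None:
        queue_db.execute("UPDATE tasks SET status = 'PENDING' WHERE id = ?", (row[0],))
    queue_db.execute("COMMIT")
    return row


def run_one() -> bool:
    """One function invocation: claim a task, run it, store the result."""
    row = claim_next()
    if row is None:
        return False
    task_id, name, payload = row
    queue_db.execute(
        "UPDATE tasks SET status = 'SUCCESS', result = ? WHERE id = ?",
        (HANDLERS[name](payload), task_id),
    )
    return True


enqueue("summarize", "A very long document about durable workflows")
while run_one():
    pass
print(queue_db.execute("SELECT status, result FROM tasks").fetchall())
# [('SUCCESS', 'A very long document...')]
```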

This works especially well for:

- AI agents

- File or data processing

- Background jobs in serverless environments

Template: https://vercel.com/templates/template/vercel-dbos-integration

Docs: https://docs.dbos.dev/integrations/vercel

OpenAI Agents SDK

DBOS now integrates with the OpenAI Agents SDK. You can run agents with durable execution backed by SQLite or Postgres.

This enables agents to:

- Recover automatically from restarts and failures

- Run long-lived workflows with human-in-the-loop steps

- Execute parallel tool calls

- Orchestrate multiple agents

- Explicitly cancel, resume, or fork workflows

- Automatically persist state for auditing and observability

DBOS embeds as a lightweight library, so no external orchestrator is required.
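Recovery after a restart rests on checkpointing: each step's output is saved, and when the workflow is re-run, completed steps return their saved output instead of executing again. The toy below shows that mechanism with `sqlite3`. It does not use the OpenAI Agents SDK or DBOS; `run_step()`, `fake_llm()`, and `fake_tool()` are hypothetical stand-ins for the library's checkpointing and for model and tool calls.

```python
import json
import sqlite3

# SQLite stands in for the checkpoint store; the schema is hypothetical.
checkpoint_db = sqlite3.connect(":memory:")
checkpoint_db.execute(
    "CREATE TABLE checkpoints (workflow_id TEXT, step INTEGER, output TEXT, "
    "PRIMARY KEY (workflow_id, step))"
)
calls = []  # records which model/tool calls actually executed


class Crash(Exception):
    """Simulates the process dying mid-run."""


def run_step(workflow_id: str, step: int, fn):
    """Return the saved output if this step already ran; otherwise run it and save it."""
    row = checkpoint_db.execute(
        "SELECT output FROM checkpoints WHERE workflow_id = ? AND step = ?",
        (workflow_id, step),
    ).fetchone()
    if row is not None:
        return json.loads(row[0])
    output = fn()
    checkpoint_db.execute(
        "INSERT INTO checkpoints VALUES (?, ?, ?)", (workflow_id, step, json.dumps(output))
    )
    checkpoint_db.commit()
    return output


def fake_llm(prompt: str) -> str:  # hypothetical stand-in for a model call
    calls.append(f"llm:{prompt}")
    return f"response to {prompt}"


def fake_tool(query: str) -> str:  # hypothetical stand-in for a tool call
    calls.append(f"tool:{query}")
    return f"results for {query}"


def agent_run(workflow_id: str, crash: bool = False) -> str:
    run_step(workflow_id, 0, lambda: fake_llm("plan"))
    found = run_step(workflow_id, 1, lambda: fake_tool("durable execution"))
    if crash:
        raise Crash("process killed before the final model call")
    return run_step(workflow_id, 2, lambda: fake_llm(f"answer from {found}"))


try:
    agent_run("agent-1", crash=True)
except Crash:
    pass
answer = agent_run("agent-1")  # recovery: steps 0 and 1 come from checkpoints
print(calls)
# ['llm:plan', 'tool:durable execution', 'llm:answer from results for durable execution']
```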

Official OpenAI docs: https://openai.github.io/openai-agents-python/running_agents/#dbos

Open source repo: https://github.com/dbos-inc/dbos-openai-agents

DBOS docs: https://docs.dbos.dev/integrations/openai-agents

Pydantic AI Integration

We also published a joint blog post with the Pydantic team: https://pydantic.dev/articles/pydantic-ai-dbos

The core idea: when an AI agent crashes halfway through a workflow, you shouldn't have to start over. With Pydantic AI + DBOS, agent runs are automatically checkpointed to Postgres.

We built a multi-agent deep research platform to demonstrate:

- Planning

- Parallel web search

- Synthesis

All durable. All observable with Logfire.
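The shape of that research pipeline (plan, fan out searches in parallel, then synthesize) can be sketched in plain Python with each stage checkpointed. This is an illustrative toy, not the Pydantic AI or DBOS API: `durable()` with an in-memory dict stands in for Postgres-backed checkpoints, and `plan()` and `search()` are hypothetical placeholders for agent calls. If one search fails, a re-run reuses the plan and the searches that already finished and only repeats the failed search.

```python
import threading
from concurrent.futures import ThreadPoolExecutor
from typing import Optional

checkpoints = {}  # in-memory stand-in for Postgres-backed checkpoints
lock = threading.Lock()
executed = []  # keys whose work actually ran (not served from a checkpoint)


def durable(key: str, fn):
    """Run fn once per key; later calls with the same key return the saved result."""
    with lock:
        if key in checkpoints:
            return checkpoints[key]
    result = fn()
    with lock:
        checkpoints[key] = result
        executed.append(key)
    return result


def plan(question: str) -> list:  # hypothetical planning agent
    return [f"{question} definition", f"{question} tools", f"{question} pitfalls"]


def search(query: str) -> str:  # hypothetical web-search agent
    return f"notes on {query}"


def research(run_id: str, question: str, fail_on: Optional[str] = None) -> str:
    queries = durable(f"{run_id}:plan", lambda: plan(question))

    def one(query: str) -> str:
        if query == fail_on:
            raise RuntimeError(f"search failed: {query}")
        return durable(f"{run_id}:search:{query}", lambda: search(query))

    with ThreadPoolExecutor(max_workers=3) as pool:
        futures = [pool.submit(one, q) for q in queries]
    # Leaving the `with` block waits for all searches, so successes are checkpointed.
    notes = [f.result() for f in futures]  # re-raises a failed search, if any
    return durable(f"{run_id}:synthesis", lambda: " | ".join(notes))  # synthesis step


try:
    research("run-1", "durable agents", fail_on="durable agents tools")
except RuntimeError:
    pass
before = len(executed)  # plan + the two searches that succeeded
report = research("run-1", "durable agents")  # re-run after the failure
print(executed[before:])  # ['run-1:search:durable agents tools', 'run-1:synthesis']
```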

Learn More about Durable Workflow Orchestration

If you like making systems reliable, we'd love to hear from you. At DBOS, our goal is to make durable workflows as lightweight and easy to work with as possible. Check it out:

- Quickstart: https://docs.dbos.dev/quickstart

- GitHub: https://github.com/dbos-inc

- Discord community: https://discord.gg/eMUHrvbu67