Since my last “what’s new” post, the product team has focused on two key areas: interoperability and privacy-preserving operations. We introduced cross-language workflow execution, deeper integration with the Postgres ecosystem, workflow delay scheduling, and a new metadata-only mode for DBOS Conductor that meets strict privacy requirements. We also expanded the DBOS ecosystem with powerful new integrations. Here’s a summary of what’s new:

New in DBOS Transact open source libraries

- Cross-language interoperation

- Improved application versioning

- Enqueue workflows from PostgreSQL UDFs and triggers

- DBOS durable primitives for concurrent workflows and steps

- Workflow delay scheduling

- Automatic backfilling cron schedules

- Improved workflow fork capabilities

New in DBOS Conductor

- Bulk workflow operations

- Executor custom metadata

- Metadata-only mode

- Workflow inspection (events, notifications, and streams)

New DBOS integrations

- LlamaIndex agent workflows integration

- Databricks partnership

Read on for more details on each of these enhancements.

New Features in DBOS Transact

Cross-language Workflow Interoperation

Break down language silos across your stack. You can now use a DBOS client in TypeScript, Python, Java, Go, or PL/pgSQL to interact with workflows running in any other language. This means you can enqueue, manage, or send messages to workflows regardless of the language they are written in.

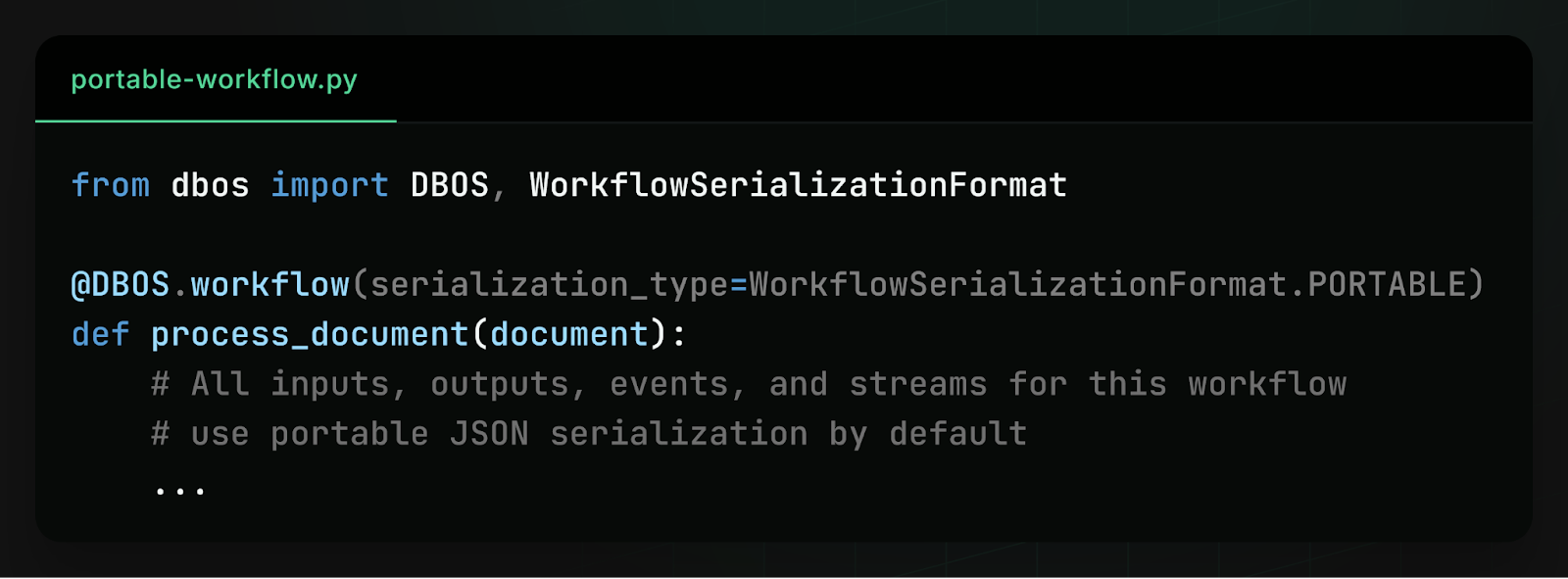

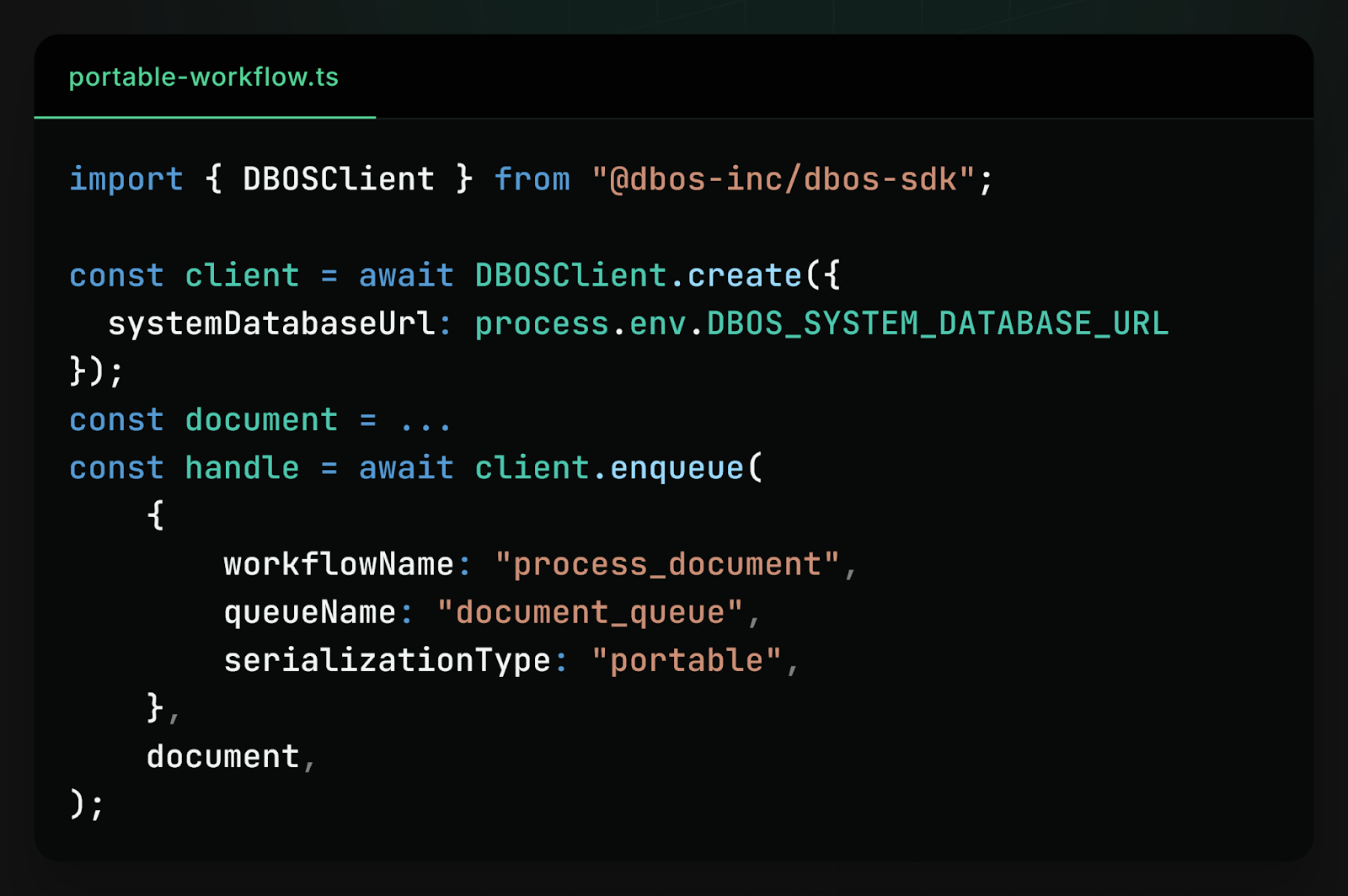

For example, declare a document processing workflow in Python and mark it as "portable" across languages.

Then, enqueue a document for processing from a TypeScript application.
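This sketch illustrates why a workflow must be marked portable. It assumes that portability comes down to encoding workflow inputs in a language-neutral format such as JSON, rather than a Python-only format like pickle. The `PortableEnqueue` record and its field names are hypothetical. They do not reflect the DBOS system-table schema.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class PortableEnqueue:
    """Hypothetical enqueue request; field names are illustrative only."""
    workflow_name: str
    queue_name: str
    args: list


def encode(req: PortableEnqueue) -> str:
    # JSON with sorted keys: any language with a JSON parser
    # (TypeScript, Java, Go, PL/pgSQL via jsonb) can read this payload.
    return json.dumps(asdict(req), sort_keys=True)


def decode(payload: str) -> PortableEnqueue:
    data = json.loads(payload)
    return PortableEnqueue(data["workflow_name"], data["queue_name"], data["args"])
```

One consequence of this design is that only JSON-representable values can cross the language boundary: strings, numbers, booleans, lists, objects, and null.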

This unlocks:

- Polyglot architectures without additional coordination services

- Gradual migration between languages

- Shared workflow infrastructure across teams

Read the docs: https://docs.dbos.dev/explanations/portable-workflows

We also wrote a blog post: https://www.dbos.dev/blog/making-languages-interoperable-with-postgres

Improved DBOS Application Versioning

Application versions are now persisted in the database, making them observable and controllable at runtime.

New public APIs:

- list_application_versions: Returns all versions, newest first

- get_latest_application_version: Returns the latest version

- set_latest_application_version: Sets a version as latest

These APIs make it easier to route new workflows to the latest version, perform blue-green deployments, or safely roll back to a previous version.
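The semantics of the three calls can be modeled with a small in-memory registry. This is a hypothetical sketch, not the DBOS implementation. It assumes "newest" means "most recently set as latest", so a rollback simply re-marks an older version.

```python
from typing import List, Optional


class VersionRegistry:
    """In-memory stand-in for application versions persisted in a database (hypothetical)."""

    def __init__(self) -> None:
        self._marked_at = {}  # version -> logical time it was last marked latest
        self._clock = 0

    def set_latest(self, version: str) -> None:
        # Marking a version as latest bumps its logical timestamp,
        # so a rollback is just set_latest() on an older version.
        self._clock += 1
        self._marked_at[version] = self._clock

    def list_versions(self) -> List[str]:
        # Newest first, i.e. most recently marked as latest.
        return sorted(self._marked_at, key=self._marked_at.__getitem__, reverse=True)

    def get_latest(self) -> Optional[str]:
        versions = self.list_versions()
        return versions[0] if versions else None


registry = VersionRegistry()
registry.set_latest("v1")
registry.set_latest("v2")  # deploy v2: new workflows route here
registry.set_latest("v1")  # roll back to v1
```

A router that starts new workflows on `registry.get_latest()` picks up a rollback immediately, with no redeploy.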

Read the docs: TypeScript, Python (Go and Java support coming soon)

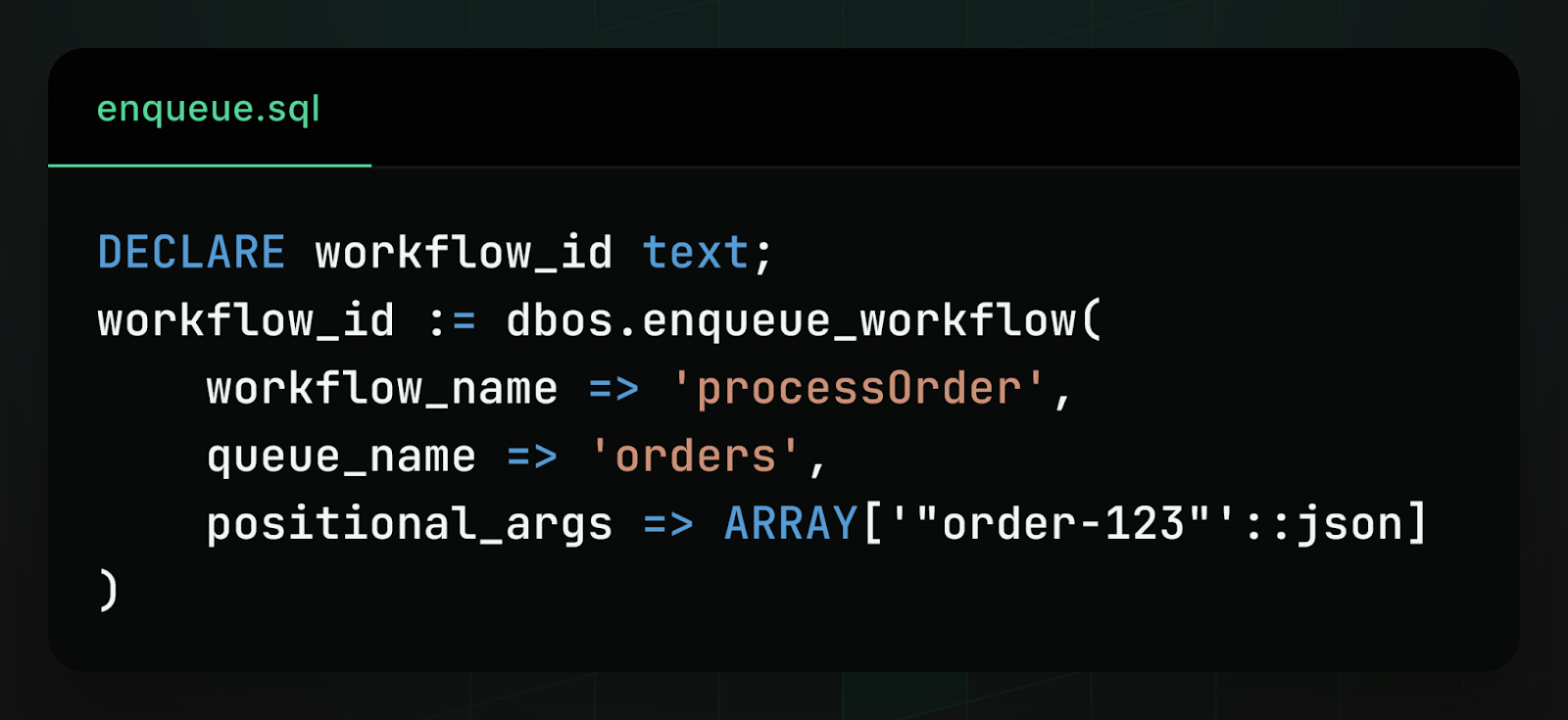

Enqueue Workflows From PostgreSQL UDFs and Triggers

To bring workflows closer to your data, we released a PostgreSQL workflows client. You can enqueue workflows or send messages directly from Postgres simply by calling a SQL function.

Key use cases:

- Database Triggers: Process new data instantly by creating a trigger that starts a workflow upon every table insert, guaranteeing exactly-once processing for each new row.

- Transactional Outbox: Enqueue workflows as part of a broader database transaction, so the enqueue commits or rolls back atomically with your database updates.
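Both patterns can be demonstrated end to end with Python's built-in `sqlite3`. This is a stand-in sketch: SQLite replaces Postgres, and the `workflow_queue` table is hypothetical. A real deployment would call the DBOS system database functions from the trigger rather than inserting into a table directly.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE documents (id INTEGER PRIMARY KEY, body TEXT NOT NULL);

-- Hypothetical queue table standing in for the DBOS system database.
CREATE TABLE workflow_queue (
    workflow_id   TEXT PRIMARY KEY,  -- deterministic ID: at most one entry per row
    workflow_name TEXT NOT NULL,
    input         TEXT NOT NULL
);

-- Fires inside the inserting transaction, so insert and enqueue are atomic.
CREATE TRIGGER enqueue_on_insert AFTER INSERT ON documents
BEGIN
    INSERT INTO workflow_queue (workflow_id, workflow_name, input)
    VALUES ('doc-' || NEW.id, 'process_document', CAST(NEW.id AS TEXT));
END;
""")


def insert_document(body: str, fail: bool = False) -> None:
    # `with conn:` commits on success and rolls back on any exception,
    # taking the trigger's queue entry with it (the outbox guarantee).
    with conn:
        conn.execute("INSERT INTO documents (body) VALUES (?)", (body,))
        if fail:
            raise RuntimeError("simulated failure after insert")
```

The trigger runs inside the inserting transaction. A failed insert therefore leaves neither a document row nor a queue entry. The deterministic `doc-<id>` workflow ID means each row can be enqueued at most once.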

Read the docs: https://docs.dbos.dev/explanations/system-tables#system-database-functions

We also wrote a blog post about how we implemented it: https://www.dbos.dev/blog/running-durable-workflows-from-postgres-udfs

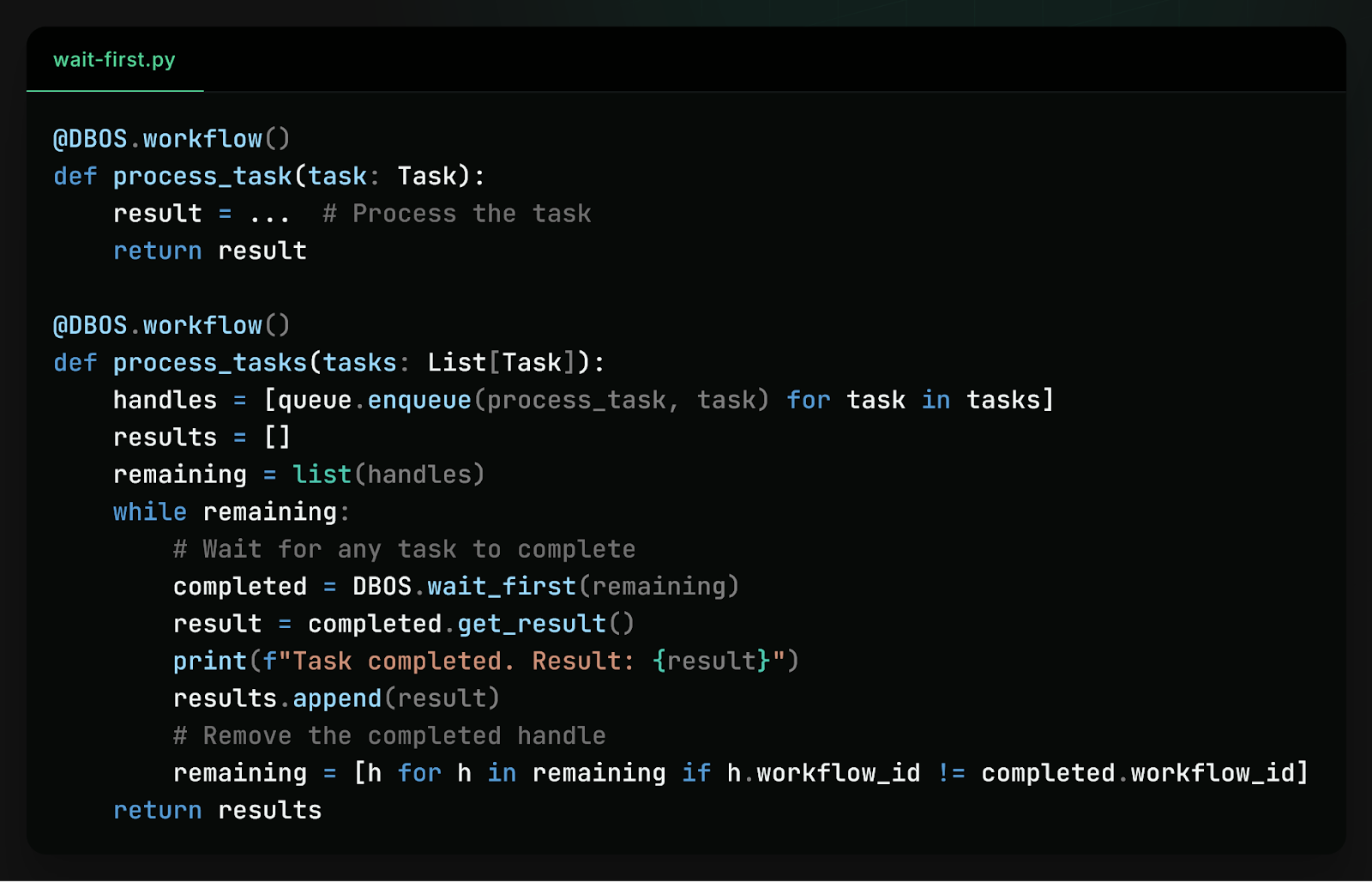

DBOS Durable Primitives For Concurrent Workflows And Steps

Tired of waiting for every task in a batch to finish before processing results? The new DBOS.waitFirst primitive lets you durably wait for the first of multiple workflows to complete. For example, you can enqueue many workflows and act on each one as soon as it completes, reacting to results as they arrive instead of waiting for the whole batch.
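Here is the fan-out-and-react pattern in plain `asyncio`. This sketch is not durable. It only shows the control flow that waitFirst makes crash-safe, where each "which one finished first?" decision would be checkpointed so a recovered workflow makes the same choices. The `process` coroutine is a hypothetical stand-in for an enqueued workflow.

```python
import asyncio
from typing import Dict, List


async def process(doc_id: int, delay: float) -> str:
    # Hypothetical stand-in for an enqueued workflow; delay simulates run time.
    await asyncio.sleep(delay)
    return f"doc-{doc_id}"


async def fan_out(delays: Dict[int, float]) -> List[str]:
    pending = {asyncio.create_task(process(i, d)) for i, d in delays.items()}
    handled: List[str] = []
    while pending:
        # Wait for the first remaining task to finish, handle it right away,
        # then loop to wait for the next one.
        done, pending = await asyncio.wait(pending, return_when=asyncio.FIRST_COMPLETED)
        for task in done:
            handled.append(task.result())
    return handled


results = asyncio.run(fan_out({1: 0.3, 2: 0.1, 3: 0.2}))
```

Results are handled in completion order rather than submission order.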

Read the docs: TypeScript, Python (Go and Java support coming soon)

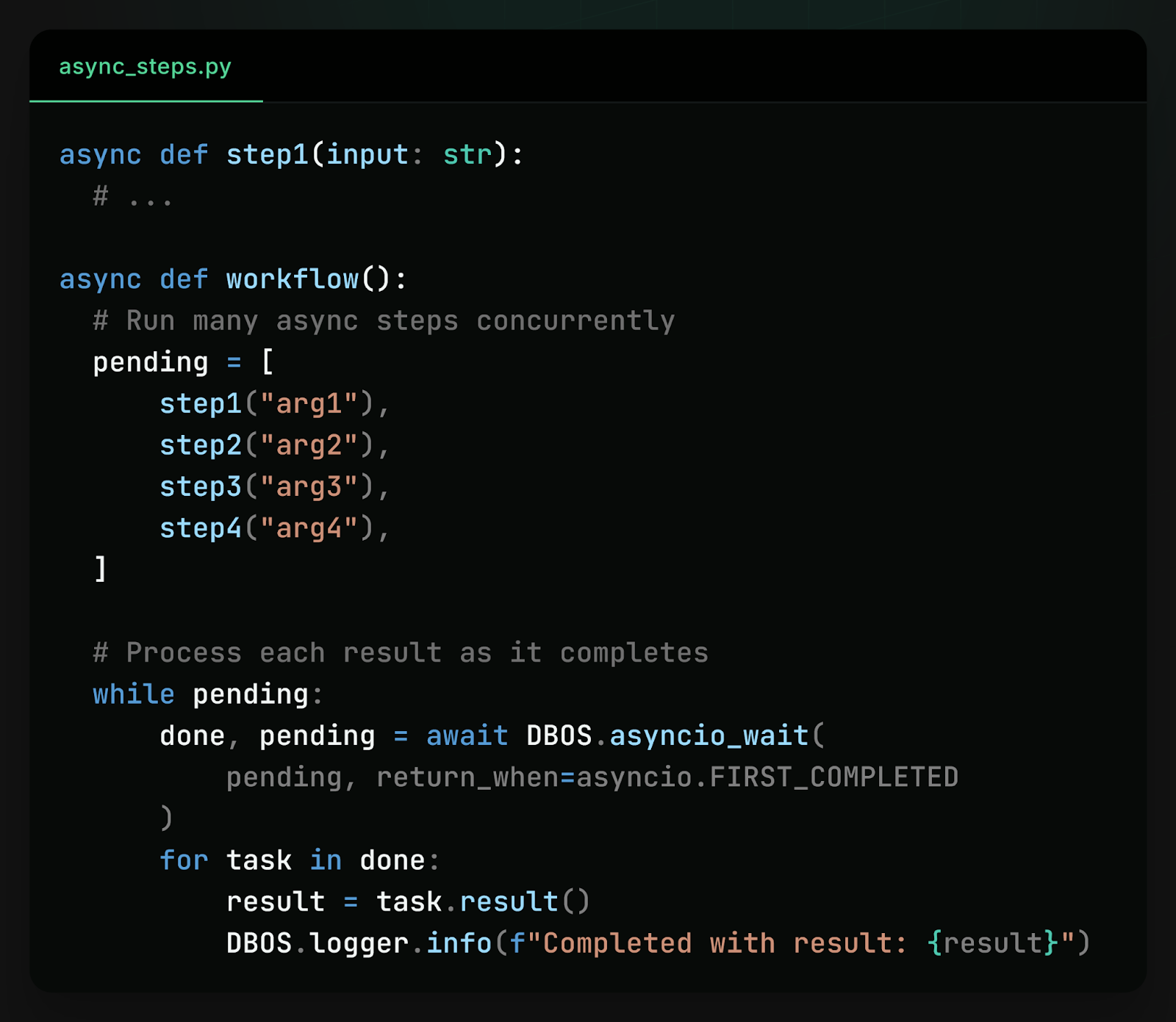

For concurrent steps, we added support for durably waiting for the first of multiple async steps to complete in Python (DBOS.asyncio_wait). It is a durable wrapper around asyncio.wait with the same interface and semantics. It checkpoints which futures are done vs. pending so the result is deterministic during workflow recovery. This allows you to retain the performance benefits of async execution while maintaining correctness under failure.
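The checkpointing idea can be sketched as a toy wrapper around `asyncio.wait`. This is a hypothetical illustration, not DBOS code. It hard-codes `FIRST_COMPLETED`, keeps checkpoints in a plain dict instead of the database, and assumes the caller passes the same awaitables in the same order on every replay. Recording the positions of finished tasks, rather than relying on timing, is what makes the replay deterministic.

```python
import asyncio
from typing import Awaitable, Dict, List, Sequence, Set, Tuple


async def checkpointed_wait(
    key: str, aws: Sequence[Awaitable], checkpoints: Dict[str, List[int]]
) -> Tuple[Set[asyncio.Future], Set[asyncio.Future]]:
    """Toy durable asyncio.wait(return_when=FIRST_COMPLETED); hypothetical, not DBOS code."""
    tasks = [asyncio.ensure_future(a) for a in aws]
    if key in checkpoints:
        # Recovery: wait for exactly the tasks recorded as done, regardless of timing.
        done_idx = checkpoints[key]
        await asyncio.wait([tasks[i] for i in done_idx])
    else:
        # First execution: wait for the first completion, then checkpoint
        # which positions finished so a replay reproduces the same split.
        done, _ = await asyncio.wait(tasks, return_when=asyncio.FIRST_COMPLETED)
        done_idx = [i for i, t in enumerate(tasks) if t in done]
        checkpoints[key] = done_idx
    done_set = {tasks[i] for i in done_idx}
    pending_set = {t for t in tasks if t not in done_set}
    return done_set, pending_set
```

On recovery the wrapper waits for exactly the recorded tasks. The workflow takes the same branch even if another task happens to finish first the second time.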

We also wrote a detailed blog post explaining how it works: https://www.dbos.dev/blog/async-python-is-secretly-deterministic

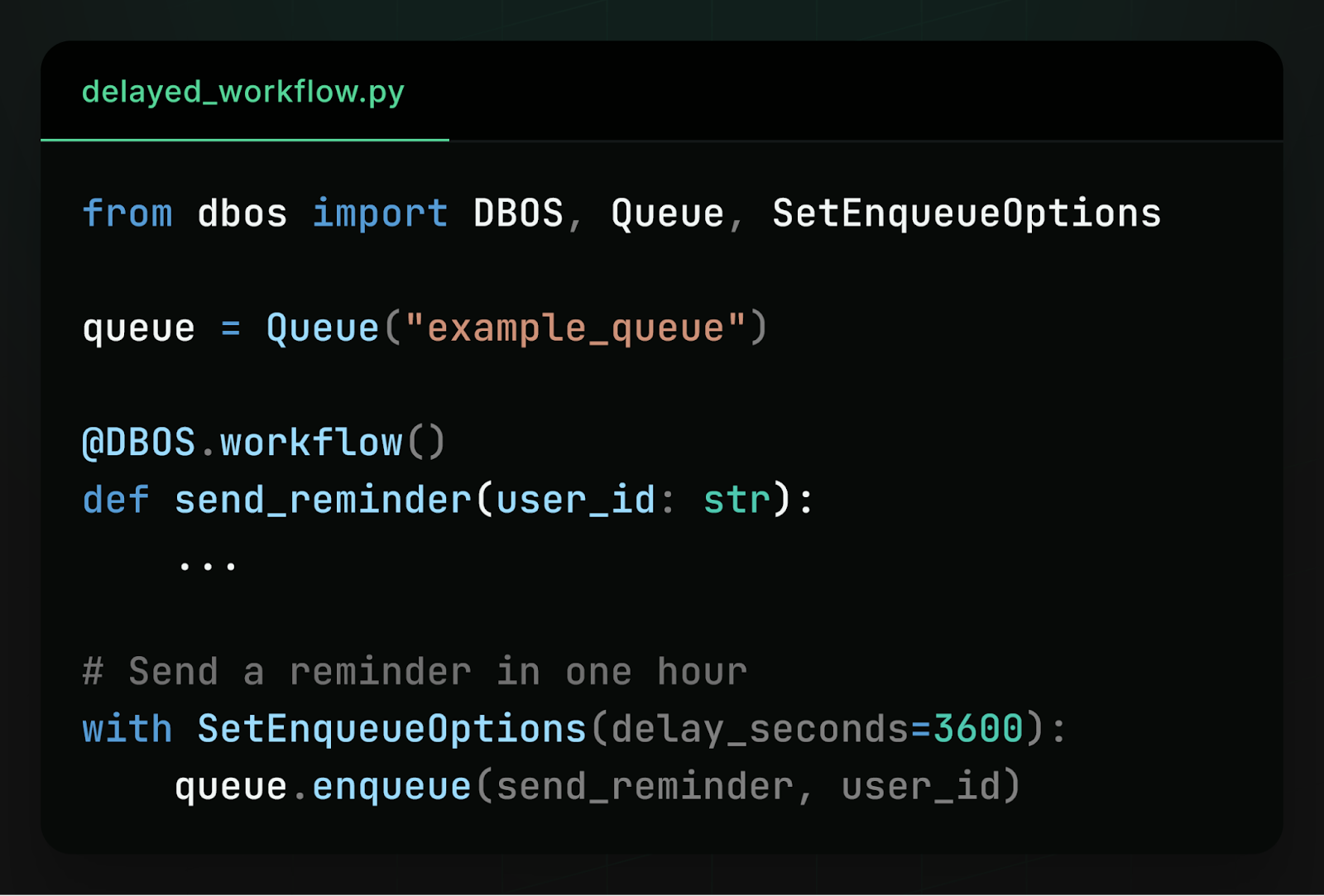

Durable Workflow Delay Scheduling

You can now schedule a workflow to run at a later time (minutes, days, or weeks in the future).

The delay is persisted in the database and durable across restarts. You can also dynamically adjust a workflow's delay before execution.
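This minimal sketch of durable delays assumes the delay is stored as an absolute deadline in a table. Persistence survives restarts because the deadline lives in the database, not in an in-process timer. The schema and method names are hypothetical. The rule that an adjusted delay counts from the moment of adjustment is also an assumption. `now` is passed in explicitly so the sketch is easy to test.

```python
import sqlite3
from typing import List


class DelayedQueue:
    """Toy durable delay queue; schema and names are hypothetical, not DBOS internals."""

    def __init__(self, path: str = ":memory:") -> None:
        # Use a file path instead of ":memory:" to keep deadlines across restarts.
        self.db = sqlite3.connect(path)
        self.db.execute(
            "CREATE TABLE IF NOT EXISTS delayed "
            "(workflow_id TEXT PRIMARY KEY, run_at REAL NOT NULL)"
        )

    def schedule(self, workflow_id: str, now: float, delay_s: float) -> None:
        # Store an absolute deadline rather than a relative timer.
        with self.db:
            self.db.execute("INSERT INTO delayed VALUES (?, ?)", (workflow_id, now + delay_s))

    def set_delay(self, workflow_id: str, now: float, delay_s: float) -> bool:
        # Adjusting a delay rewrites the deadline; only possible while the
        # workflow is still waiting. Returns False if it was already dequeued.
        with self.db:
            cur = self.db.execute(
                "UPDATE delayed SET run_at = ? WHERE workflow_id = ?",
                (now + delay_s, workflow_id),
            )
        return cur.rowcount == 1

    def pop_ready(self, now: float) -> List[str]:
        # Claim every workflow whose deadline has passed (single-process sketch).
        with self.db:
            rows = self.db.execute(
                "SELECT workflow_id FROM delayed WHERE run_at <= ? ORDER BY run_at",
                (now,),
            ).fetchall()
            self.db.executemany("DELETE FROM delayed WHERE workflow_id = ?", rows)
        return [r[0] for r in rows]
```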

Read the docs: TypeScript, Python (Go and Java support coming soon)

Automatic Backfilling Cron Schedules

Cron schedules now support time zones and automatic backfilling of missed runs. If your application is paused or offline, DBOS can automatically enqueue missed executions when it resumes.

You can enable this with automatic_backfill=True when creating a schedule.
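The core of backfilling is computing which fire times fell inside the offline window. This sketch simplifies a cron schedule to "every hour on the hour" (like `0 * * * *`) and is not the DBOS scheduler. It requires timezone-aware datetimes. A real implementation must also handle DST transitions, which this fixed-step loop does not.

```python
from datetime import datetime, timedelta, timezone
from typing import List


def missed_hourly_runs(last_run: datetime, now: datetime) -> List[datetime]:
    """Top-of-the-hour fire times in (last_run, now]: the runs a backfill would enqueue.

    Simplified stand-in for a cron schedule such as "0 * * * *". Inputs must be
    timezone-aware; fixed-offset zones work, DST transitions are not handled.
    """
    if last_run.tzinfo is None or now.tzinfo is None:
        raise ValueError("use timezone-aware datetimes")
    # First top of the hour strictly after the last completed run.
    t = last_run.replace(minute=0, second=0, microsecond=0) + timedelta(hours=1)
    runs = []
    while t <= now:
        runs.append(t)
        t += timedelta(hours=1)
    return runs


utc = timezone.utc
missed = missed_hourly_runs(datetime(2024, 1, 1, 10, 0, tzinfo=utc),
                            datetime(2024, 1, 1, 13, 30, tzinfo=utc))
```

Backfilling should also be idempotent. One way is to enqueue each missed run with a deterministic workflow ID derived from its scheduled time, so running the backfill twice does not duplicate work. This ID scheme is an illustrative assumption.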

Read the docs: TypeScript, Python (Go and Java support coming soon)

Improved Workflow Fork Capabilities

We expanded workflow forking with better control and observability.

New features:

- Filter workflows based on whether they were forked (was_forked_from). If you're forking workflows to recover from failures, this lets you filter out the original executions and only show the forked re-attempts.

- Improved support for forking workflows with child workflows. You can specify replacement_children to remap child workflow dependencies during fork. When the forked workflow encounters a step that starts a child workflow matching an original ID, it substitutes the replacement ID instead. This is useful when you need to fork a parent workflow that depends on the results of child workflows that have also been forked.

These improvements make it easier to recover from failures, re-run workflows with fixes, and debug complex workflow graphs.
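Both fork features boil down to simple lookups. In this hypothetical sketch, `WorkflowRecord.forked_from` stands in for the fork lineage that `was_forked_from` filters on. `child_id_for_step` shows the substitution rule that `replacement_children` applies during a forked replay. Neither function is part of the DBOS API.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class WorkflowRecord:
    workflow_id: str
    forked_from: Optional[str] = None  # hypothetical: set on fork re-attempts


def forked_only(records: List[WorkflowRecord]) -> List[WorkflowRecord]:
    # Hide the original executions and keep only the forked re-attempts.
    return [r for r in records if r.forked_from is not None]


def child_id_for_step(original_child_id: str, replacement_children: Dict[str, str]) -> str:
    # During a forked replay, a step that would start `original_child_id`
    # uses the mapped replacement (e.g. an already-forked child) if there is one.
    return replacement_children.get(original_child_id, original_child_id)
```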

Currently available in Python; other languages are coming soon.

New Features in DBOS Conductor

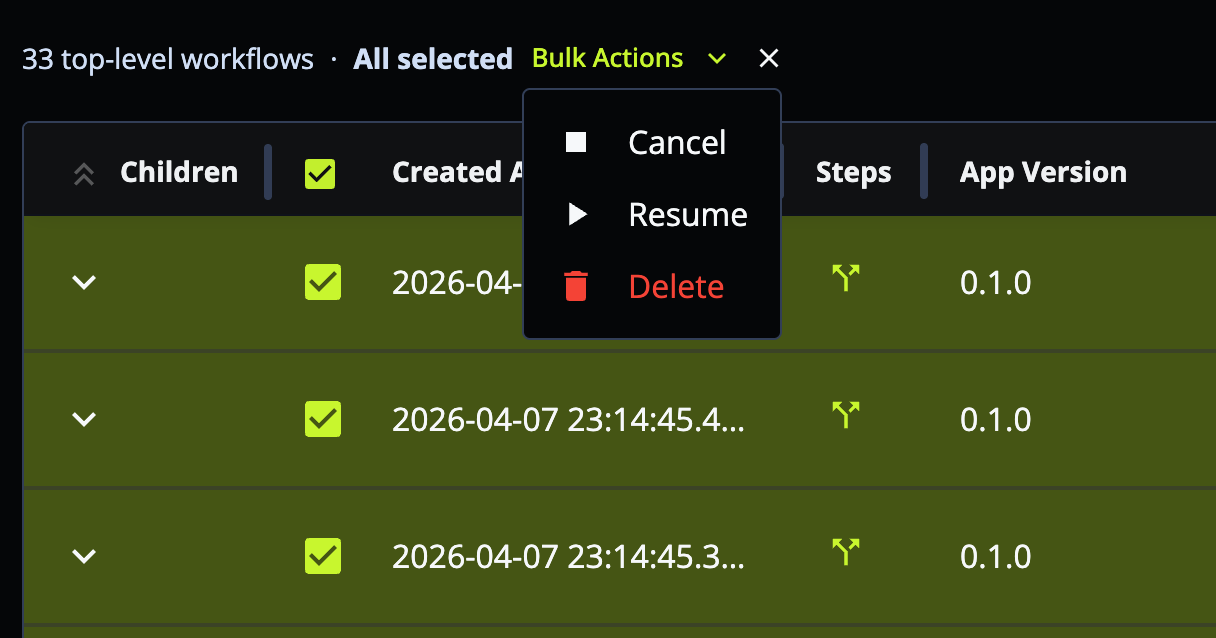

Bulk Workflow Operations

You can now execute bulk workflow operations, including cancel, resume, and delete, either programmatically or directly through the web UI. This is useful for sweeping operational tasks, such as canceling all workflows that have been stuck pending for the past hour.
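A bulk operation is essentially a query followed by a per-workflow action. This sketch models the "cancel everything pending for over an hour" example in memory. The `Workflow` record is hypothetical and the status strings are illustrative. A real client would invoke its cancel API for each selected ID instead of mutating a field.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List


@dataclass
class Workflow:
    workflow_id: str
    status: str  # illustrative values: "PENDING", "SUCCESS", "CANCELLED"
    created_at: datetime


def bulk_cancel_stuck(workflows: List[Workflow], now: datetime,
                      max_age: timedelta = timedelta(hours=1)) -> List[str]:
    # Step 1: select every workflow that has been PENDING longer than max_age.
    stuck = [w for w in workflows if w.status == "PENDING" and now - w.created_at > max_age]
    # Step 2: apply the action to each selected workflow.
    for w in stuck:
        w.status = "CANCELLED"
    return [w.workflow_id for w in stuck]
```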

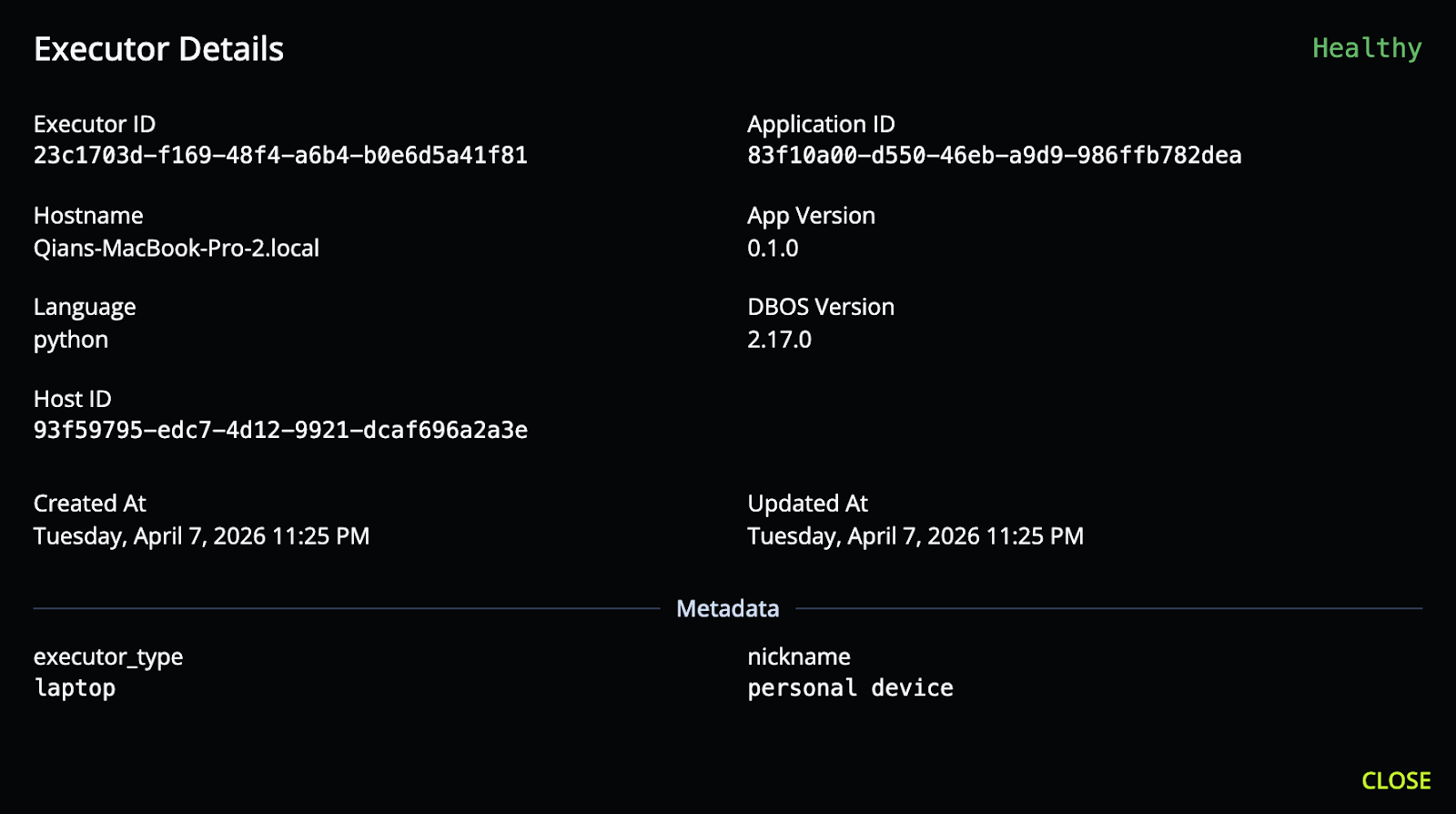

Executor Custom Metadata

Executors can now attach JSON metadata (e.g., region, instance type, environment), which is displayed in DBOS Conductor. This makes it easier to identify executors, debug issues across environments, and understand deployment topology.

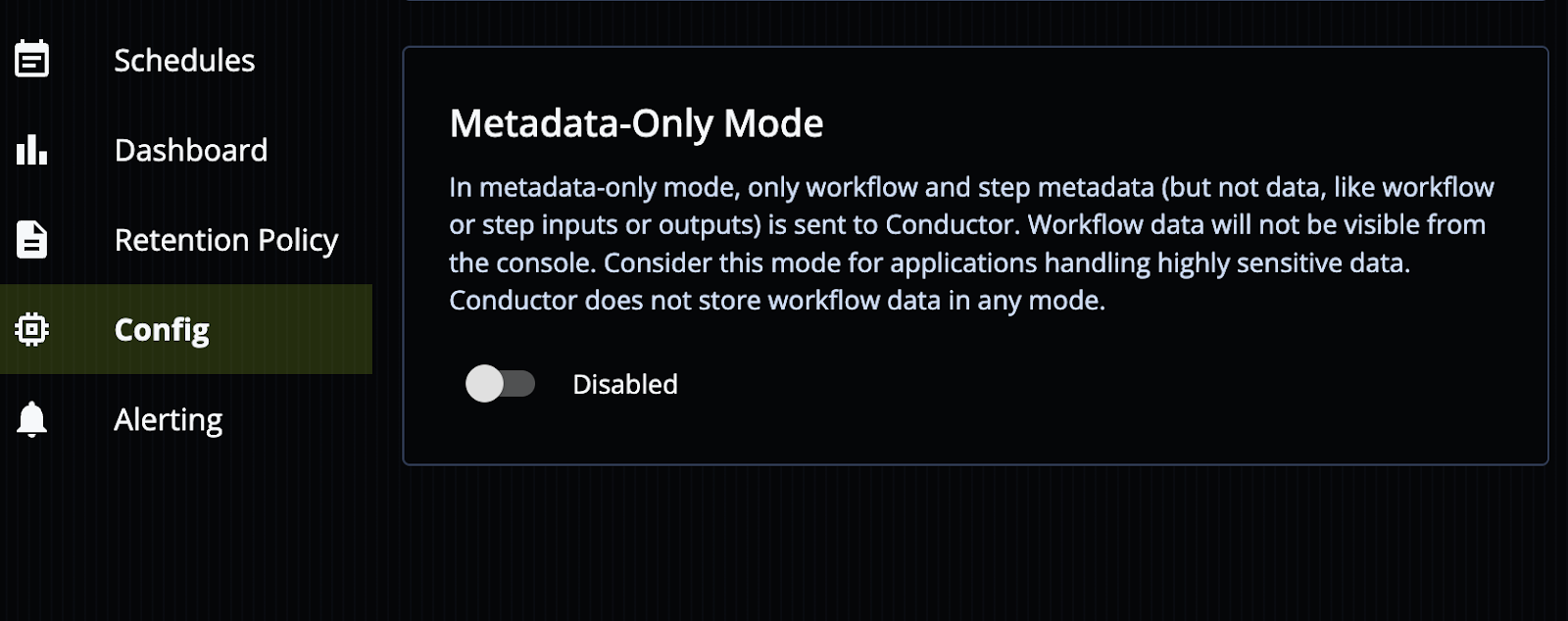

Metadata-only Mode

We added metadata-only mode to provide a strict privacy guarantee:

- Only workflow and step metadata (name, status, timestamps) are sent to Conductor.

- Inputs and outputs stay fully private in your environment and are never sent to Conductor.

This is particularly useful for sensitive workloads, regulated environments, and air-gapped deployments.
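One safe way to implement such a guarantee is an allowlist projection applied before anything leaves your environment. This sketch is an assumption about the approach, not Conductor's code. The exact field names are illustrative, including `workflow_id`, which an exporter presumably needs to identify records.

```python
from typing import Any, Dict

# Allowlist of fields an exporter may send; names are illustrative, based on
# "name, status, timestamps" plus an ID so records can be matched up.
METADATA_FIELDS = ("workflow_id", "name", "status", "created_at", "updated_at")


def to_metadata_only(record: Dict[str, Any]) -> Dict[str, Any]:
    # Copy only allowlisted keys; inputs, outputs, and unknown fields stay local.
    return {k: record[k] for k in METADATA_FIELDS if k in record}
```

An allowlist fails closed: a field added to workflow records later is withheld by default. A denylist of `inputs` and `output` would leak it instead.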

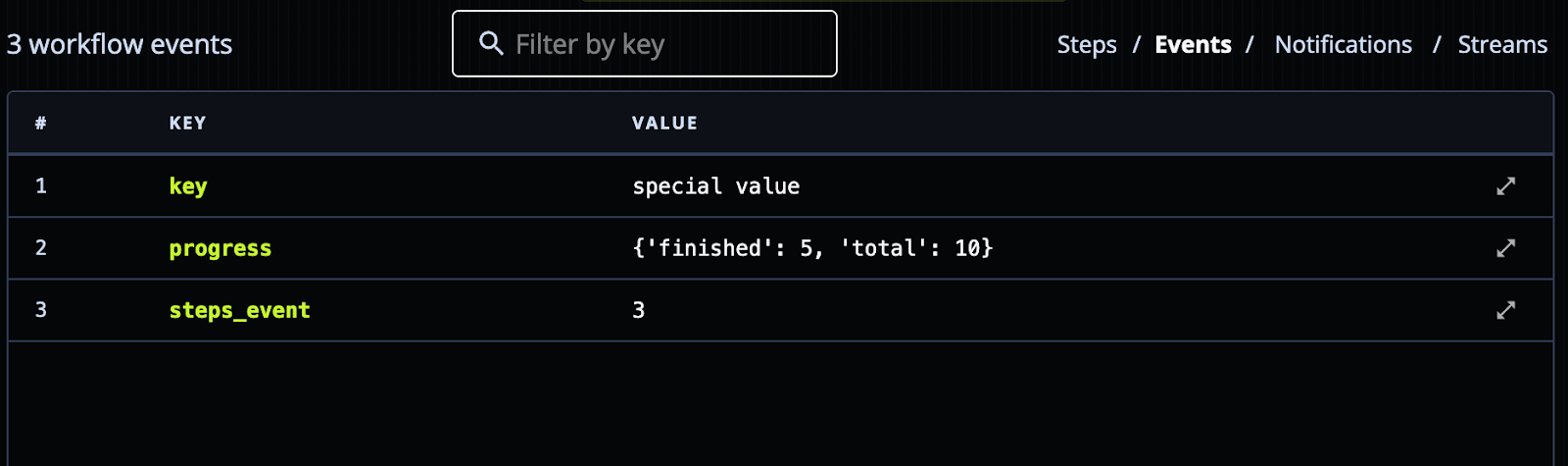

Workflow Inspection: Events, Notifications, and Streams

You can now inspect the events, notifications, and streams associated with a workflow. This is useful for tracking workflow progress and debugging.

New Partnerships and Integrations

LlamaIndex Agent Workflows Integration

We introduced an integration with LlamaIndex for building durable agent workflows. By using the llama-agents-dbos package, every workflow transition is automatically persisted. This guarantees your long-running AI workflows survive crashes, restarts, and errors, resuming exactly where they left off without requiring you to write manual checkpointing or snapshot logic.

Integration highlights:

- Every step transition persists automatically.

- Zero external dependencies when using SQLite, with the ability to scale to multi-replica deployments via Postgres.

- Built for replication: each replica owns its workflows, while Postgres coordinates across instances.

- Idle release frees up memory for long-running workflows waiting on human input.

- Built-in crash recovery automatically detects and relaunches incomplete workflows.
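The automatic persistence and crash-recovery bullets describe step-level checkpointing. Here is a minimal sketch of that idea using SQLite. It is not the llama-agents-dbos API. The `StepCheckpointer` class and its schema are hypothetical, and workflow state must be JSON-serializable.

```python
import json
import sqlite3
from typing import Any, Callable, List


class StepCheckpointer:
    """Toy step-level checkpointing (hypothetical, not the llama-agents-dbos API)."""

    def __init__(self, conn: sqlite3.Connection) -> None:
        self.conn = conn
        conn.execute(
            "CREATE TABLE IF NOT EXISTS steps (workflow_id TEXT, step INTEGER, "
            "output TEXT, PRIMARY KEY (workflow_id, step))"
        )

    def run(self, workflow_id: str, steps: List[Callable[[Any], Any]], state: Any) -> Any:
        for i, step in enumerate(steps):
            row = self.conn.execute(
                "SELECT output FROM steps WHERE workflow_id = ? AND step = ?",
                (workflow_id, i),
            ).fetchone()
            if row is not None:
                # Completed before a crash: restore the checkpointed state, skip re-execution.
                state = json.loads(row[0])
                continue
            state = step(state)
            # Persist this transition before moving on to the next step.
            with self.conn:
                self.conn.execute(
                    "INSERT INTO steps VALUES (?, ?, ?)",
                    (workflow_id, i, json.dumps(state)),
                )
        return state
```

If the process crashes partway through, calling `run` again with the same `workflow_id` restores completed steps from their checkpoints. Only the remaining steps execute.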

LlamaIndex docs: https://developers.llamaindex.ai/python/llamaagents/workflows/dbos/

DBOS docs: https://docs.dbos.dev/integrations/llamaindex

Databricks Technology Partnership

We announced a technology partnership with Databricks, including an integration with Databricks Lakebase to help agentic AI developers build agents that are fault tolerant and observable by default.

The integration embeds DBOS directly underneath agent workflows, providing fault tolerance and observability that runs entirely on your existing Databricks infrastructure.

Read our blog post on the Databricks Lakebase and DBOS integration: https://www.dbos.dev/blog/building-durable-agents-dbos-databricks

Learn More about Durable Workflow Orchestration

If you like making systems reliable, we'd love to hear from you. At DBOS, our goal is to make durable workflows as lightweight and easy to work with as possible. Check it out:

- Quickstart: https://docs.dbos.dev/quickstart

- GitHub: https://github.com/dbos-inc

- Discord community: https://discord.gg/eMUHrvbu67